Less noise.

More signal.

OMNI is a smart terminal layer that intelligently filters and prioritizes command output before it reaches your AI agent.

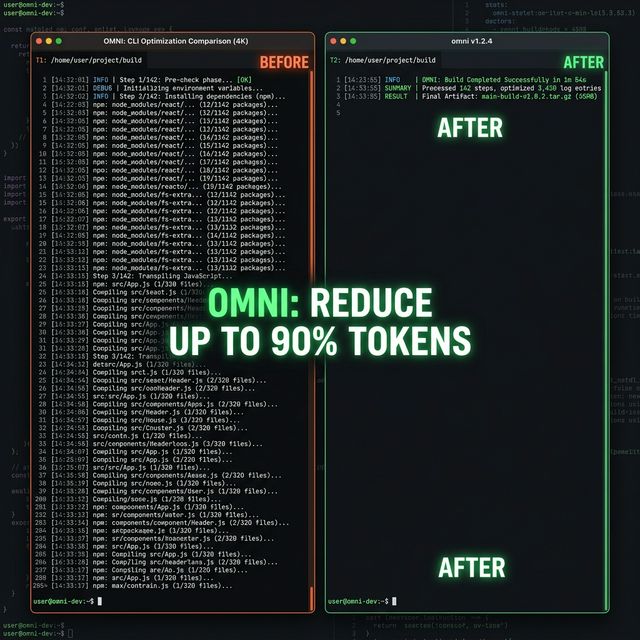

Cut token consumption by up to 90% and give your AI the clean context it needs to reason better.

Fully transparent. You're always in control.

brew install fajarhide/tap/omni

The Philosophy of Context Quality

AI agents like Claude are only as smart as the context you feed them.

The Problem: Noise & Cost

When autonomous AI agents read your terminal, they read *everything*. A simple npm install can dump roughly 15,000 tokens of useless junk into your AI's context.

- Extremely Expensive: You pay real money for every single token of junk output.

- Diluted Reasoning: Critical errors get buried. The AI becomes "dumb" trying to sift through garbage.

- Model Lock-in: You're forced to use the most expensive flagship models just to have a context window big enough.

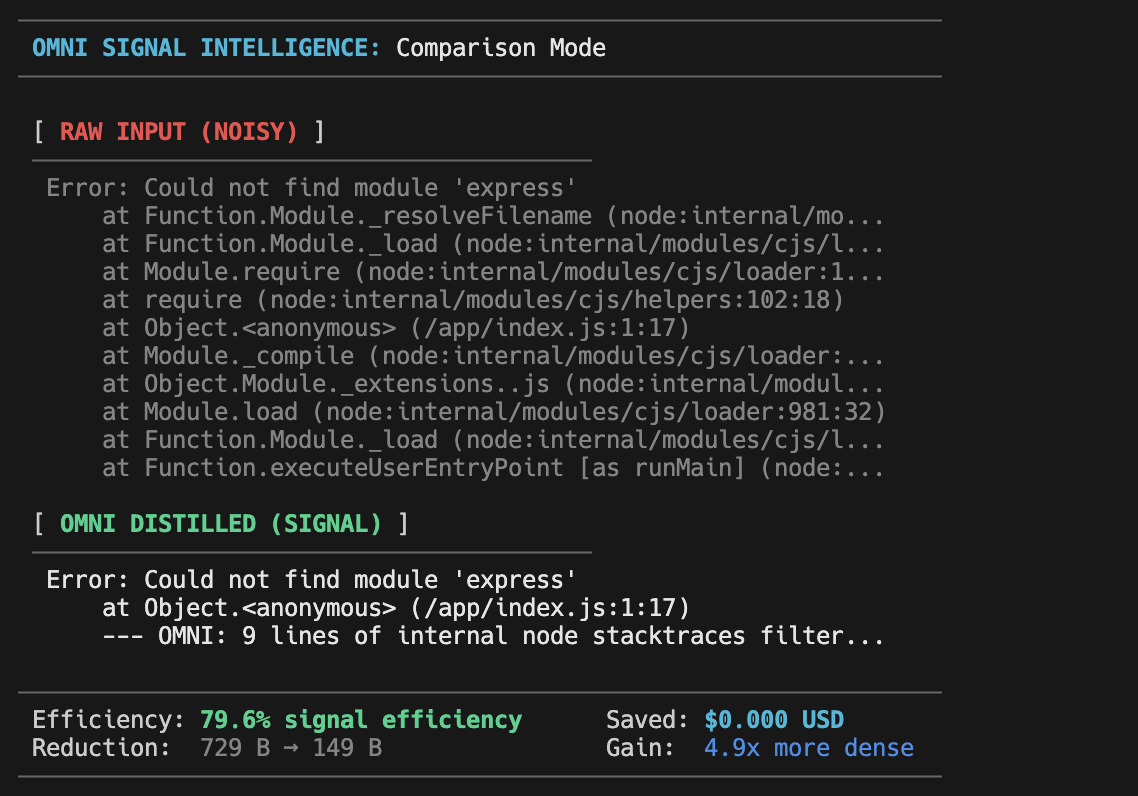

The Solution: Pure Signal

OMNI acts as a smart sieve between your terminal and the AI, passing along only the focused, relevant output.

- No More AI Confusion: Your AI stops getting confused by noisy output and focuses directly on the real problem.

- Stop Re-Prompting: Get accurate answers faster without having to constantly re-explain the context.

- Up to 90% Token Reduction: Drastically cut your agentic API bills by not paying for useless junk.
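
To make the sieve idea concrete, here is a minimal sketch of line-level filtering. It is not OMNI's actual filter engine: the patterns, the `distill` function, and the rule that error lines are always kept are assumptions made for illustration.

```python
import re

# Hypothetical noise patterns, chosen for illustration only.
# OMNI's real filters are not shown in this document.
NOISE_PATTERNS = [
    re.compile(r"^npm (warn|notice)\b", re.IGNORECASE),
    re.compile(r"^\s*$"),
    re.compile(r"^(added|audited) \d+ packages?\b"),
]

# Lines matching this are treated as signal and never dropped (an assumption).
SIGNAL_PATTERN = re.compile(r"\b(error|failed|fatal)\b", re.IGNORECASE)


def distill(raw: str) -> str:
    """Return raw command output with known-noise lines removed."""
    kept = []
    for line in raw.splitlines():
        if SIGNAL_PATTERN.search(line):
            kept.append(line)  # signal wins over any noise pattern
            continue
        if any(p.search(line) for p in NOISE_PATTERNS):
            continue  # drop recognized noise
        kept.append(line)  # unknown lines pass through unchanged
    return "\n".join(kept)
```

Letting unknown lines pass through is a deliberately conservative choice in this sketch: a filter that drops only what it recognizes is less likely to hide something the AI needs.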

Feel the Difference

See exactly how much semantic space you save.

The Breakthrough Layer

A native, invisible utility belt explicitly built for the Agentic AI era.

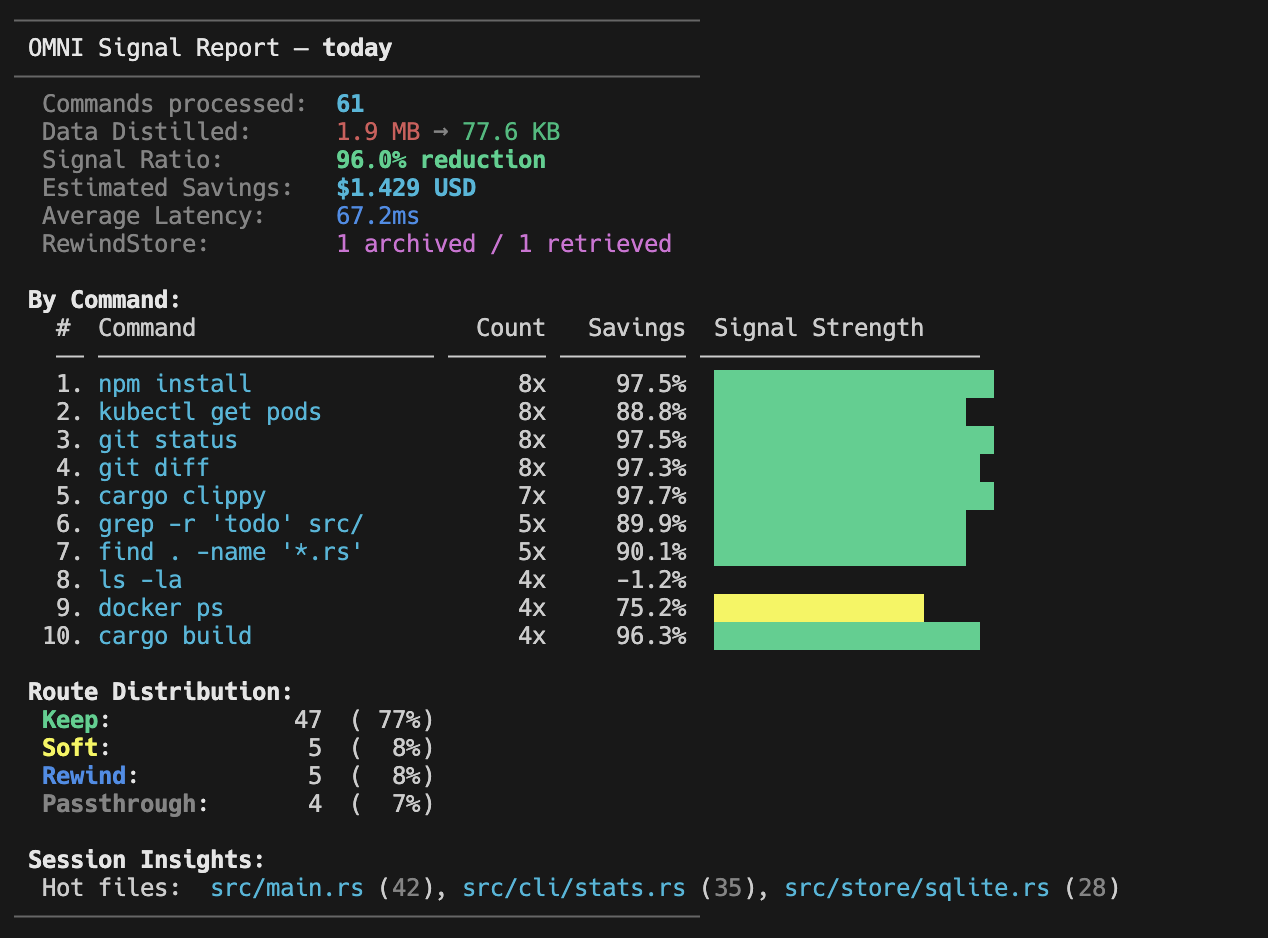

Distill Monitor

Run omni stats to track lifetime token savings and ROI across all your projects.

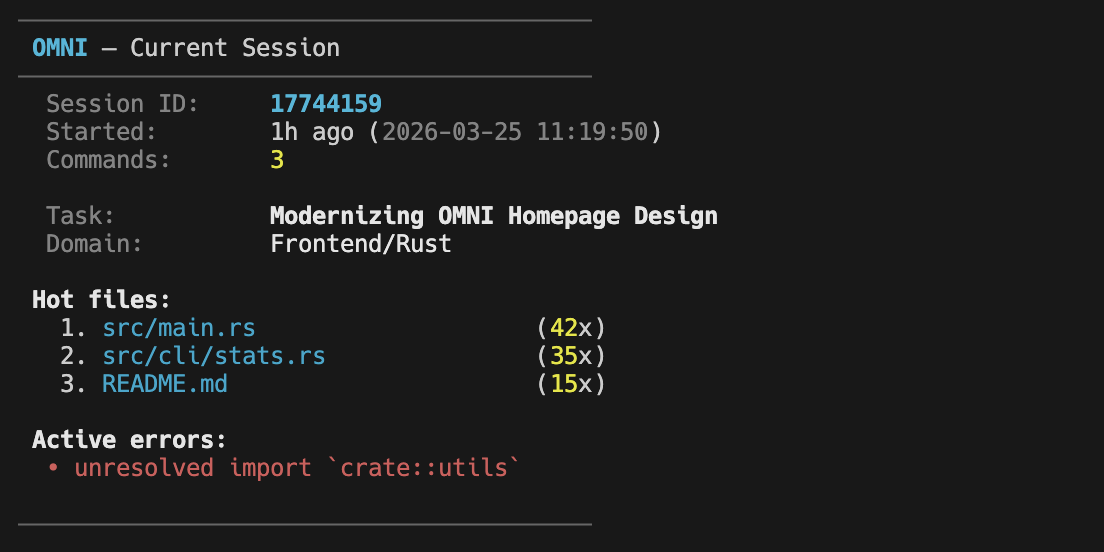

Session Intelligence

OMNI tracks hot files and active errors across your workspace. It remembers what the AI knows, injecting contextual continuity into every invocation to save even more tokens.

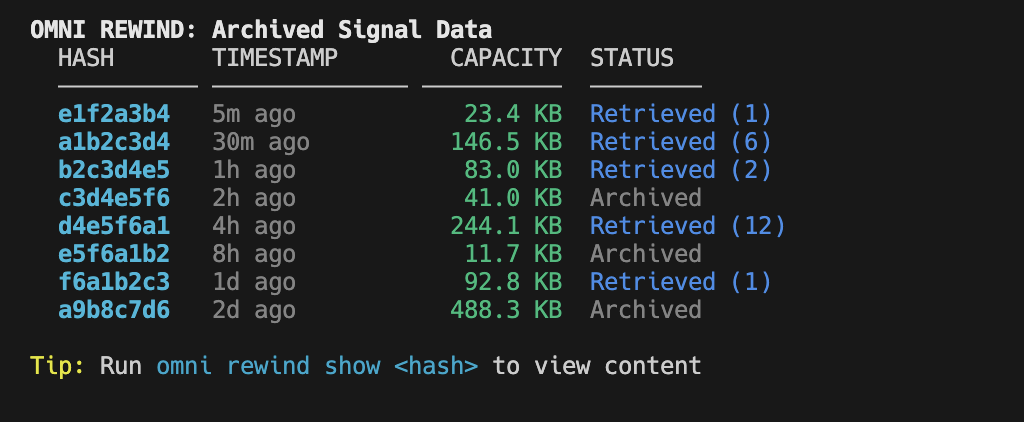

RewindStore - Zero Signal Loss

Worried OMNI filtered something important? The built-in RewindStore saves the raw output locally, indexed by its SHA-256 hash. If the AI needs the full log, it can retrieve it instantly.
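
One common way to build this kind of store is content addressing: hash the raw output and use the digest as the lookup key. The sketch below shows that pattern only. The `RawOutputStore` class, its methods, and the one-file-per-digest layout are hypothetical and do not describe RewindStore's real on-disk format.

```python
import hashlib
from pathlib import Path


class RawOutputStore:
    """Hypothetical content-addressed store: one file per SHA-256 digest."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def save(self, raw: str) -> str:
        """Write the raw output to disk and return its hex digest as the key."""
        data = raw.encode("utf-8")
        digest = hashlib.sha256(data).hexdigest()
        (self.root / digest).write_bytes(data)
        return digest

    def load(self, digest: str) -> str:
        """Return the full raw output previously saved under this digest."""
        return (self.root / digest).read_bytes().decode("utf-8")
```

In this sketch the filtered output would carry the digest alongside it, so an agent that suspects something was dropped can ask for the full log by key. Identical outputs hash to the same digest, so repeated runs of the same command do not store duplicates.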

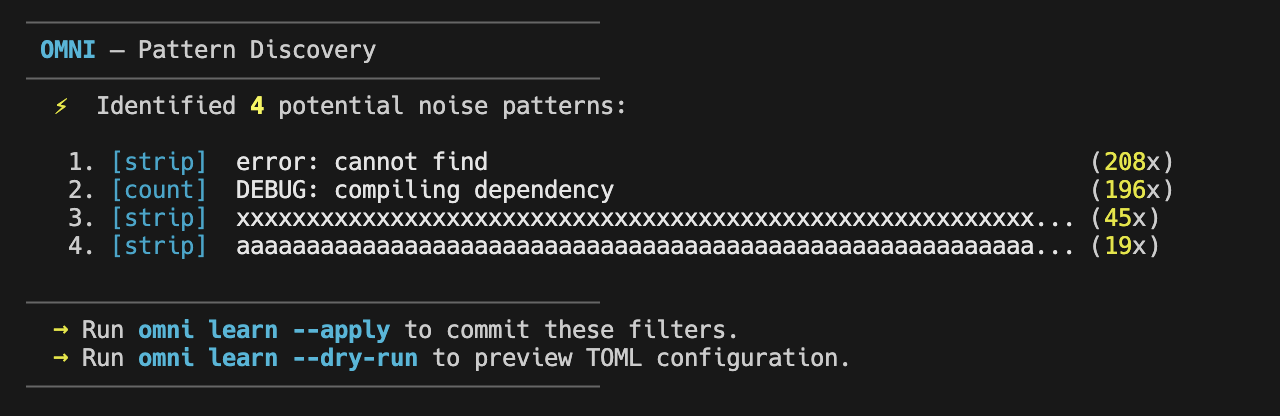

Omni Learn - Autonomous Discovery

Analyze your terminal history to identify noise patterns and generate optimized semantic filters automatically.
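
As a rough illustration of how noise patterns could be found in history, the sketch below collapses numbers in each line into a placeholder and counts how often each resulting template repeats. This is an assumed heuristic, not Omni Learn's actual algorithm; `candidate_noise` and its `min_count` threshold are hypothetical names.

```python
import re
from collections import Counter


def candidate_noise(lines: list[str], min_count: int = 3) -> list[str]:
    """Return line templates that repeat at least min_count times.

    Digits are replaced with <N> so that lines differing only in
    counters or progress values collapse into a single template.
    """
    templates = Counter(
        re.sub(r"\d+", "<N>", line.strip())
        for line in lines
        if line.strip()  # ignore blank lines
    )
    return [t for t, n in templates.most_common() if n >= min_count]
```

Templates that repeat often, such as progress counters, are candidates for a filter, while one-off lines such as a build error stay below the threshold.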

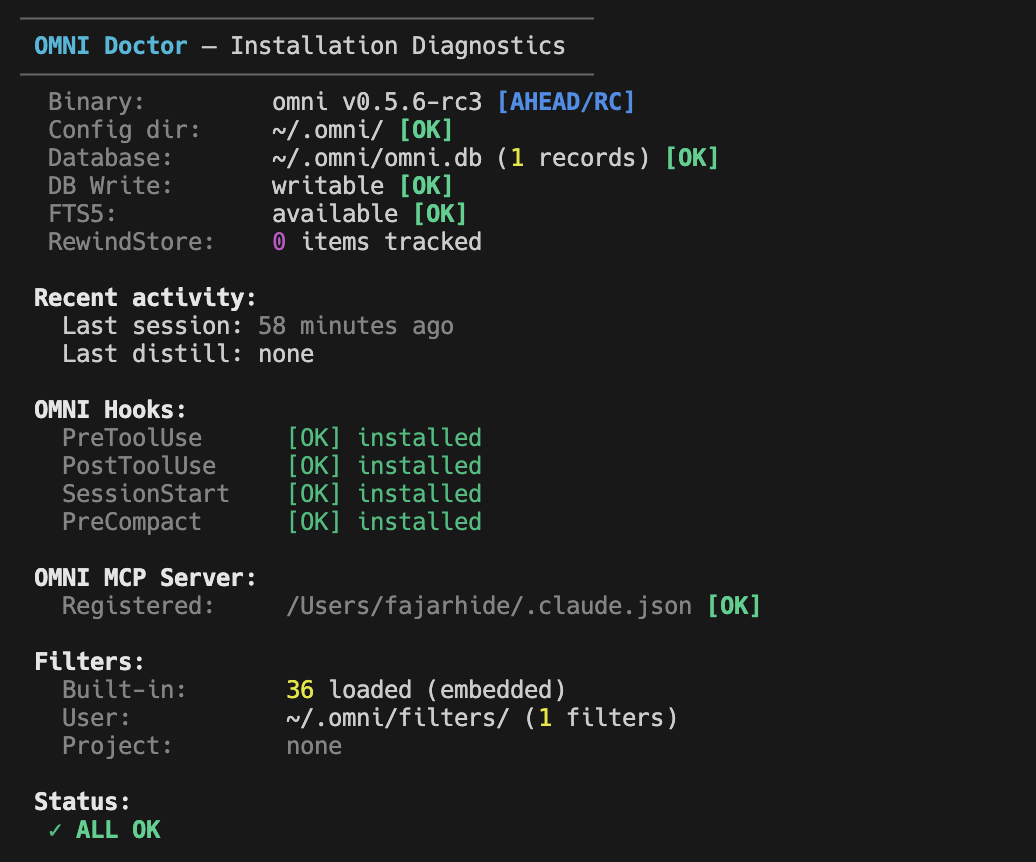

Omni Doctor: Self-Healing Config

A proactive health check utility that scans your filters, dependencies, and environment for issues, providing instant --fix solutions.

Core Engine & Versatility

Built with a Native Rust Core for sub-millisecond execution. OMNI provides universal support for Unix/Windows and Heimsense integration for pinpoint accuracy.

Command-First Architecture

The world's first context-aware terminal signal pipeline. Built for the Agentic era.